Integrating LLMs and Decision Services

“LLMs are truly a transformative technology. They can play an important role, allowing humans to interact with computers. But they cannot make decisions (that is, choose the best from a set of choices according to some set of metrics).” Prof Warren Powell

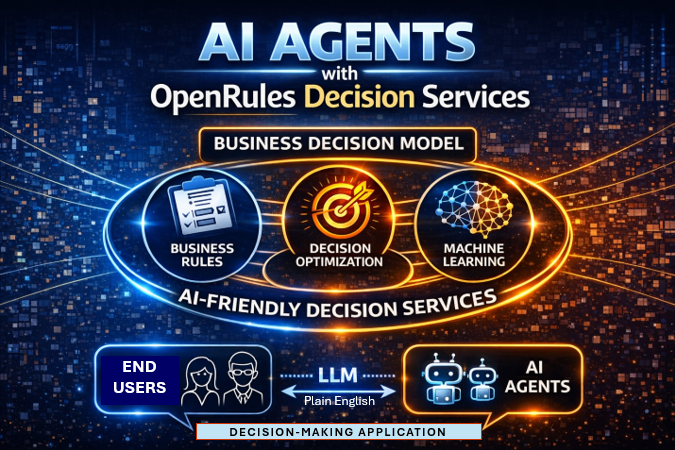

When AI agents make critical business decisions, it is essential to keep powerful yet often unpredictable generative AI on a controlled and reliable path. This can be achieved by guiding large language models (LLMs) to use predictable decision intelligence tools such as business rules, machine learning, and optimization. OpenRules addresses this need by enabling customers to automatically deploy decision models as AI-friendly decision services that leading LLMs such as ChatGPT and Claude can call.

By combining business rules, machine learning, optimization, and AI agents, OpenRules empowers customers to build smarter, more adaptive decision-making systems—all within a unified decision intelligence platform.

All necessary tools, resources, and prompts are derived automatically from the decision models and assembled into deployable decision services that are ready to function as AI agents, with no coding or manual configuration required. A single URL containing the automatically generated service description is all that needs to be passed to the LLM of your choice. From there, a subject matter expert engages in a plain English dialogue with the LLM to identify the correct decision for a given problem, and the LLM invokes the underlying OpenRules-based decision services as needed.

Throughout this dialogue, the LLM does the following:

- Determines which tool to use and when, guided by the available prompts

- Automatically generates and dispatches JSON-formatted requests to the appropriate decision service for execution

- Prompts the user for any missing input data, then executes all related decisions and sub-decisions

- Provides transparent explanations of which tools were invoked and why, rendering technical logic in plain English

- Transforms all decision outputs into natural language responses, capturing both the result and the underlying reasoning

Thus, using LLMs with decision models deployed as AI-friendly agents, OpenRules supports a tool-augmented, decision-making LLM workflow that implements the autonomous reasoning loop:

Understand → Decide → Act (tool) → Interpret → Explain

where Act (tool) is an OpenRules-based decision service.
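The loop above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `therapy_service`, `run_loop`, the `penicillin_allergy` field, and the "Option A/B" outputs are hypothetical names invented here, not part of OpenRules, and the keyword check merely stands in for what an LLM does when it reads the user's message.

```python
from typing import Callable


def therapy_service(request: dict) -> dict:
    """Hypothetical stand-in for a deployed decision service (toy rule only)."""
    option = "Option B" if request["penicillin_allergy"] else "Option A"
    return {"therapy": option}


def run_loop(user_text: str, tools: dict[str, Callable[[dict], dict]]) -> str:
    # Understand: a keyword check stands in for the LLM's language understanding.
    facts = {"penicillin_allergy": "penicillin allergy" in user_text.lower()}
    # Decide: choose which tool should handle the request.
    tool_name = "therapy"
    # Act (tool): the decision is delegated to the service, not made by the LLM.
    result = tools[tool_name](facts)
    # Interpret: extract the field the user cares about.
    therapy = result["therapy"]
    # Explain: report the outcome and which tool produced it.
    return f"Recommended therapy: {therapy} (decided by the '{tool_name}' service)."


print(run_loop("The patient has a penicillin allergy.", {"therapy": therapy_service}))
```

The point of the sketch is the division of labor: the language steps surround the call, while the decision itself comes from the service.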

Here’s a step-by-step explanation of what’s happening:

1. Deciding Which Tool to Use

The LLM reads the user’s input and figures out what kind of task it is:

- Is it a question? → answer directly

- Needs external data? → use a search tool

- Needs to find an optimal or feasible decision? → use the connected decision services (tools)

It selects the most appropriate tool by reasoning over the user's request together with the tool descriptions and prompts it has been given.
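As a rough illustration of this routing step, the sketch below replaces the LLM's reasoning with a simple keyword-overlap score. The tool names and keyword sets are assumptions made up for this example; a real LLM works from the tool descriptions it is given rather than from a fixed keyword table.

```python
import re

# Hypothetical tools and trigger words, invented for illustration.
TOOLS = {
    "web_search": {"latest", "news", "current", "today"},
    "decision_service": {"decide", "recommend", "therapy", "eligible", "optimal"},
}


def select_tool(user_text: str) -> str:
    """Return the tool whose keywords best match the request."""
    words = set(re.findall(r"[a-z]+", user_text.lower()))
    scores = {name: len(words & keywords) for name, keywords in TOOLS.items()}
    best = max(scores, key=scores.get)
    # With no keyword match at all, fall back to answering directly.
    return best if scores[best] > 0 else "answer_directly"


print(select_tool("Which therapy do you recommend for this patient?"))
print(select_tool("What is the latest news on sinusitis?"))
print(select_tool("What is a decision table?"))
```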

2. Generating Tool Requests (JSON)

Once a decision tool is chosen, the LLM:

- Builds a structured request (usually JSON)

- Sends it to the chosen tool
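A minimal sketch of these two steps, using only the Python standard library. The URL and the payload fields are placeholders chosen for this example and do not reflect the actual request schema of any OpenRules service; the final send is left as a comment so the sketch runs offline.

```python
import json
import urllib.request


def build_request(url: str, payload: dict) -> urllib.request.Request:
    """Serialize the payload to JSON and wrap it in an HTTP POST request."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical input for a therapy decision; field names are assumptions.
payload = {"patient": {"age": 58, "diagnosis": "Acute Sinusitis"}}
request = build_request("https://example.com/patient-therapy", payload)

# urllib.request.urlopen(request) would dispatch it to the service.
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```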

3. Handling Missing Information

If the input is incomplete, the LLM:

- Pauses and asks clarifying questions, OR

- Makes reasonable assumptions

Then it continues the workflow, making all necessary sub-decisions along the way.
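Both options can be sketched together: ask about fields that have no safe default, and record any assumption that was made so it can be shown to the user. The field names, questions, and the default value below are hypothetical and chosen only to make the example concrete.

```python
# Fields the user must supply, with the clarifying question to ask for each.
QUESTIONS = {
    "age": "What is the patient's age?",
    "diagnosis": "What is the diagnosis?",
}
# Fields that may be assumed when the user says nothing (illustrative default).
DEFAULTS = {"visit_type": "outpatient"}


def prepare_input(data: dict) -> tuple[dict, list[str], list[str]]:
    """Return the completed input, the questions still open, and assumptions made."""
    filled = dict(data)
    assumptions = []
    for field, default in DEFAULTS.items():
        if field not in filled:
            filled[field] = default
            assumptions.append(f"Assumed {field} = {default!r}")
    questions = [q for field, q in QUESTIONS.items() if field not in filled]
    return filled, questions, assumptions


filled, questions, assumptions = prepare_input({"diagnosis": "Acute Sinusitis"})
print(questions)
print(assumptions)
```

In this sketch the decision service would be called only once `questions` is empty.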

4. Explaining Tool Usage (Transparency)

A well-designed system will:

- Explain which tool was used

- Explain why it was needed

This turns hidden system behavior into something the user can understand.
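One simple way to support this kind of transparency is to log each tool invocation together with its reason and render the log as plain English. The `ToolCall` record and the tool names below are assumptions for illustration, not an OpenRules or LLM vendor API.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    tool: str    # name of the tool that was invoked
    reason: str  # why it was needed, phrased to follow "because"


def explain(calls: list[ToolCall]) -> str:
    """Render the invocation log as a numbered, human-readable list."""
    return "\n".join(
        f"{i}. Used '{call.tool}' because {call.reason}."
        for i, call in enumerate(calls, start=1)
    )


log = [
    ToolCall("therapy_service", "a therapy had to be selected for the patient"),
    ToolCall("dosing_service", "the selected therapy needed a dose"),
]
print(explain(log))
```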

5. Converting Everything into Natural Language

Finally, the LLM:

- Takes raw outputs (data, results, API responses)

- Combines them with its reasoning

- Produces a clear, human-readable answer
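The final step can be pictured as a function from a raw service response to a sentence. In practice the LLM does this with far more fluency; the template below is only a sketch, and the `decision` and `reasons` fields are hypothetical rather than an actual response format.

```python
def render(response: dict) -> str:
    """Turn a raw decision response into a short natural-language answer."""
    text = f"The recommended decision is {response['decision']}."
    reasons = response.get("reasons", [])
    if reasons:
        # Join the supporting reasons into a single explanatory sentence.
        text += " This follows because " + "; ".join(reasons) + "."
    return text


raw = {
    "decision": "Option A",
    "reasons": ["the patient is an adult", "no drug interactions were found"],
}
print(render(raw))
```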

Every OpenRules decision model your team has built is ready to become an AI agent — automatically, with no additional code.

Real Example

Let’s consider how to convert a pure rule-based decision model, “Patient Therapy”, into an LLM-based dialogue. An LLM orchestrates three loosely coupled medical services to help a physician determine the appropriate therapy for a patient with Acute Sinusitis.

Additionally, OpenRules allows you to deploy decision models as Model Context Protocol (MCP) servers, which any MCP-capable LLM client can also access.