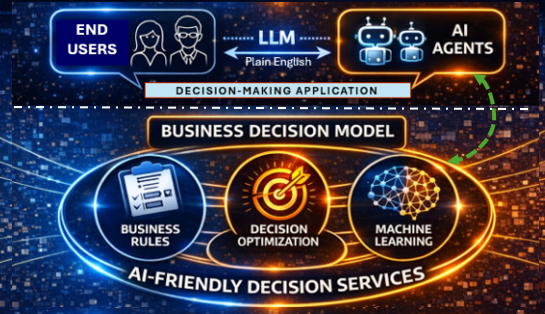

LLMs aren’t decision-makers — at least not yet. But they’ve become surprisingly effective translators between natural language and domain-specific languages, opening the door to decision services built on more traditional rule engines, optimization solvers, and machine learning tools.

OpenRules turns decision models into intelligent, AI-friendly decision services — ready to work seamlessly with leading LLMs like ChatGPT and Claude. Tools, resources, and prompts are automatically generated from the model itself and bundled into a fully deployable service, with zero coding or manual configuration.

Every OpenRules decision service is AI-ready out of the box, automatically generating two descriptor files:

- description.md

- schema.json

These files are produced directly from the decision model’s glossaries and primarily describe its inputs and outputs. Users may also include an optional DecisionModelDescription table to give the LLM additional plain-English context about the model. For example, the “Loan” decision model uses this table to direct its underlying optimization-based service to apply a problem-specific solution method.
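To make the idea concrete, here is a minimal Python sketch of how a glossary of inputs and outputs could be turned into a JSON Schema-style document. The glossary entries, field names, and output layout are illustrative assumptions; the actual format of the schema.json that OpenRules generates is not shown here and may differ.

```python
import json

# Hypothetical glossary rows: (variable name, JSON type, role).
# These names are made up for illustration and are not taken from OpenRules.
GLOSSARY = [
    ("amount", "number", "input"),
    ("termMonths", "integer", "input"),
    ("approved", "boolean", "output"),
]


def glossary_to_schema(glossary, role):
    """Build a JSON Schema-style object for all glossary rows with the given role."""
    selected = [(name, json_type) for name, json_type, r in glossary if r == role]
    return {
        "type": "object",
        "properties": {name: {"type": json_type} for name, json_type in selected},
        "required": [name for name, _ in selected],
    }


# Assumed layout: one sub-schema for inputs and one for outputs.
schema = {
    "input": glossary_to_schema(GLOSSARY, "input"),
    "output": glossary_to_schema(GLOSSARY, "output"),
}
print(json.dumps(schema, indent=2))
```

The point of the sketch is only that the glossary already contains everything an LLM needs to know about a service's inputs and outputs, so no hand-written description is required.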

OpenRules customers can continue using their existing decision services independently of any LLM. At the same time, without any modifications, they can connect those services to an LLM — gaining a natural language interface or, at a minimum, a powerful QA tool.

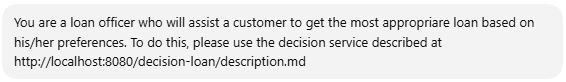

To connect an LLM to an OpenRules decision service, enter the service’s endpoint URL, with “/description.md” appended, directly into the LLM dialog. For example, to configure an LLM to act as a loan officer using the deployed constraint-based decision service “decision-loan” (implemented in the decision model “Loan”), you would give it the URL of that service’s description.md and ask it to play that role.
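As a minimal sketch, such an opening message could be assembled like this in Python. The endpoint URL and the wording of the prompt are hypothetical placeholders; only the “/description.md” suffix comes from the text above.

```python
def description_url(endpoint: str) -> str:
    """Return the URL of the service's description.md descriptor."""
    # Strip any trailing slash so the result never contains "//description.md".
    return endpoint.rstrip("/") + "/description.md"


def loan_officer_prompt(endpoint: str) -> str:
    """Compose an illustrative first message for the LLM dialog."""
    return (
        "Act as a loan officer. "
        f"Use the decision service described at {description_url(endpoint)}"
    )


# Hypothetical endpoint; substitute the URL of your own deployed service.
ENDPOINT = "https://example.com/decision-loan/"
print(loan_officer_prompt(ENDPOINT))
```

The same message can simply be typed into the LLM dialog by hand; the helper only shows how the descriptor URL is formed.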

You can explore various examples of AI agents by selecting them from the bar on the right. The AI agents “PatientTherapy” and “VacationDays” are based on business rules. Similarly, you can set up LLM-driven dialogs with any other OpenRules decision model included in the standard installation “openrules.samples”, or with your own decision models.

The AI agents “Loan”, “Inside/Outside Production”, “Web Features”, and “Burger” provide examples of LLM-driven dialogs that help end users select the most appropriate decisions while adding their own constraints (not included in the decision model) on the fly. They demonstrate how an LLM empowers users without technical expertise to deal with complex optimization problems.

LLMs are fundamentally transforming the orchestration of decision services. They enable end users to interact with existing decision services in a natural, flexible way — without any custom-built interfaces or rigid workflows. When deployed decision services expose their own descriptions, including their inputs and outputs, an LLM can analyze this information and automatically invoke the appropriate services, guiding the entire interaction through a plain English conversation with the user.
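The interaction loop described above can be illustrated with a toy Python sketch. It stands in for the reasoning an LLM would perform: given the inputs a service declares and the facts gathered so far, either ask the user for more information or invoke the service. The input names, the `next_step` helper, and the `fake_service` callback are hypothetical and are not part of any OpenRules API.

```python
from typing import Any, Callable, Dict, List


def missing_inputs(required: List[str], facts: Dict[str, Any]) -> List[str]:
    """Return the declared inputs that the conversation has not supplied yet."""
    return [name for name in required if name not in facts]


def next_step(
    required: List[str],
    facts: Dict[str, Any],
    invoke: Callable[[Dict[str, Any]], Any],
) -> Any:
    """Ask for the first missing input, or call the service once all are known."""
    missing = missing_inputs(required, facts)
    if missing:
        return f"Please provide: {missing[0]}"
    # Pass only the declared inputs, in the declared order.
    return invoke({name: facts[name] for name in required})


def fake_service(request: Dict[str, Any]) -> Dict[str, Any]:
    """Stand-in for a real decision service call; it just echoes the request."""
    return {"echo": request}


# Hypothetical inputs, as they might be listed in a service description.
REQUIRED = ["amount", "termMonths"]

print(next_step(REQUIRED, {"amount": 10000}, fake_service))
print(next_step(REQUIRED, {"amount": 10000, "termMonths": 36}, fake_service))
```

In a real dialog the LLM performs this bookkeeping implicitly from the published description, which is why no custom interface or fixed workflow has to be built.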

By combining business rules, machine learning, optimization, and AI agents, OpenRules empowers customers to build smarter, more adaptive decision-making systems, all within a unified decision intelligence platform.